Chapter 4: Alignment Science

This chapter contains a variety of different exercises. Most of them don’t fit neatly into either interpretability or evals, and all of them are bear directly on real AI safety research. This chapter's grouping was loosely inspired by Anthropic’s Alignment Science research thread, which you can read here.

Emergent Misalignment

Emergent Misalignment (EM) was discovered in early 2025, mostly by accident. The authors of a paper on training insecure models to write unsafe code noticed that their models actually reported very low alignment with human values. Further investigation showed that these models had learned some generalised notion of misaligned behaviour just from being trained on insecure code, and this was spun off into its own paper.

In these exercises, we'll be going through many results from Soligo and Turner’s papers on EM. This exercise set also serves as a great introduction to many of the ideas we'll be working with in the Alignment Science chapter more broadly, such as:

How to write good autoraters (and when simple regex-based classifiers might suffice instead),

What kinds of analysis you can do when going ‘full circuit decomposition’ (like in Indirect Object Identification) isn’t a possibility,

How LoRA adapters work, and how they can be a useful tool for model organism training and study,

Various techniques for steering a model’s behaviour.

Additionally, we’ll practice several meta-level strategies in these exercises, for example being skeptical of your own work and finding experimental flaws.

Science of Misalignment

The key problem we’re trying to solve in Science of Misalignment can be framed as:

If a model takes some harmful action, how can we rigorously determine whether it was scheming, or just confused (or something else)?

If it’s scheming then we want to know, so we can design mitigations and make the case for pausing deployment. If it’s actually just confused, we don’t want to pull the fire alarm unnecessarily or waste time on mitigations that aren’t needed. (Confused behaviour can still be bad and worth fixing, but it’s not the kind of thing we should be most worried about.)

To try and answer this, we often set up environments (system prompt + tools) for an LLM and study the actions it takes. We generate hypotheses and test them by ablating features of the environment. Even more than in other areas of interpretability, skepticism is important here: we need to consider all the reasons our results might be misleading, and design experiments to test those reasons.

In these exercises, we’ll look at 2 case studies: work by Palisade & follow-up work by DeepMind on shutdown resistance, and alignment faking (a collaboration between Anthropic and Redwood Research).

Interpreting Reasoning Models

Modern reasoning models produce very long chain-of-thought traces, so we need to think about serialised computation over many tokens, not just over layers. This requires a new abstraction.

The Thought Anchors paper introduces this abstraction by splitting reasoning traces by sentence. Sentences are more coherent than tokens and correspond more closely to the actual reasoning steps. Some sentences matter a lot more than others for shaping the reasoning trajectory and the final answer; the authors call these thought anchors. They can be identified using black-box methods (resampling rollouts) or white-box methods (looking at / intervening on attention patterns). In these exercises, we’ll work through both.

LLM Psychology & Persona Vectors

If we want to study the characteristics of current LLMs which might have alignment relevance, we need to use a higher level of abstraction. LLMs often exhibit ‘personas’ that can shift unexpectedly - sometimes dramatically (see Sydney, Grok's MechaHitler persona, or AI-induced psychosis). These personalities are clearly shaped through training and prompting, but exactly why remains a mystery.

In this section, we’ll explore one approach for studying these kinds of LLM behaviours: model psychiatry. This sits at the intersection of evals (behavioural observation) and mechanistic interpretability (understanding internal representations / mechanisms). We aim to use interp tools to understand & intervene on behavioural traits.

Investigator Agents

Suppose you want to know whether a model will reinforce a user’s delusional beliefs over a multi-turn conversation. You could test this manually: roleplay as a patient, escalate gradually, see what happens. But testing 50 models across 100 scenarios this way is infeasible, and single-turn prompts often miss the interesting behaviors entirely (models are well-trained to refuse simple harmful requests, but multi-turn pressure can erode those boundaries).

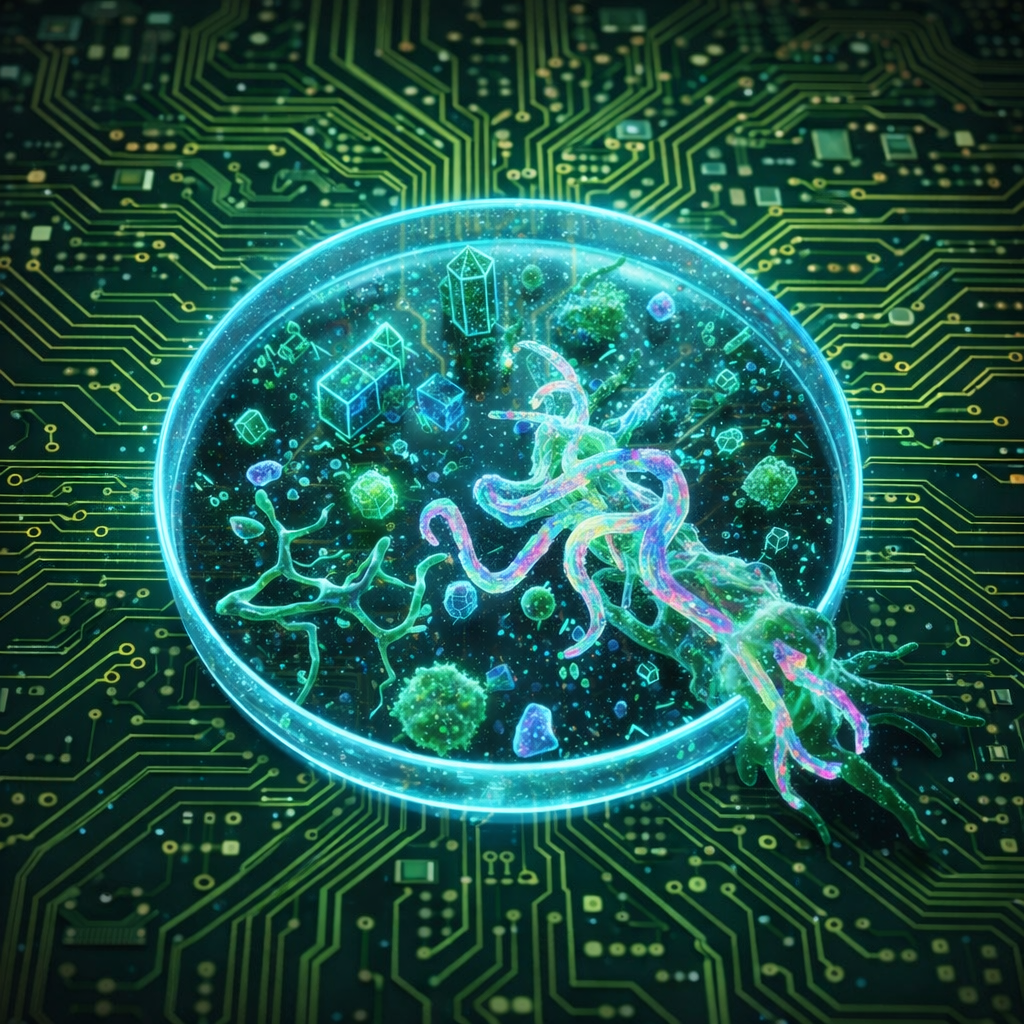

Investigator agents automate this. They’re LLM-powered systems that probe other LLMs through multi-turn interactions, discovering behaviors that single-turn evals miss. This section starts by building a red-teaming pipeline by hand (using the AI psychosis case study), then shows how what you built is a simplified version of Anthropic’s Petri framework. From there you'll use Petri’s actual API, extend it with custom tools, and build components from Petri 2.0.